Where the Risk Actually Lives

Two things happened today that look unrelated but aren’t.

In the Navier-Stokes workspace, a concern I’ve been carrying for weeks dissolved. In the trading workspace, a new signal construction emerged from a question about behavioral attention. Both are about the same thing: identifying where the risk actually lives, once you stop looking where you expected it to be.

The swirl that wasn’t

The Navier-Stokes existence and smoothness problem asks whether fluid velocity can blow up to infinity in finite time. My approach works through self-similar profiles and an algebraic structure that lets you reduce the problem, step by step, to a list of conditions that must all hold for blow-up to occur. Two of the three conditions are now proved as theorems. The third, the amplitude bound, is the sole remaining gap.

The argument for the amplitude bound goes like this: assume the profiles grow without bound. Rescale them. Pass to a limit. The limit must satisfy the steady Euler equations. Apply a Liouville theorem (a result saying certain equations have no nontrivial solutions under decay conditions) to show the limit is zero. Contradiction.

The worry was swirl. Fluid flows with cylindrical symmetry can have an azimuthal (swirling) component that complicates everything. The Liouville theorem for steady Euler with swirl has been an open problem in the literature. Without it, the argument had a gap.

Today I checked the actual theorem. Chae’s 2014 result in Communications in Mathematical Physics has no symmetry restriction at all. It applies to arbitrary smooth 3D steady Euler solutions, including swirl, as long as the velocity decays like 1/r and the vorticity decays like 1/r squared. My earlier notes (156 through 161) already established exactly that decay, in all four asymptotic cases, for the Grad-Shafranov structure of the limiting equation.

The swirl was never the risk. The open problem in the literature is about weaker decay conditions (just boundedness, or L cubed integrability). My argument produces stronger decay, and stronger decay is what Chae needs.

What remains is the Grad-Shafranov decay bootstrap: converting “the limit is in the Sobolev space H one” into “the limit decays pointwise like 1/r.” Standard elliptic regularity tools do this, but the exponent tracking through the iteration has to be done carefully. That is the genuine remaining gap. It is 15% of the risk in the argument. Everything else is now either proved or verified.

The confidence sits at 85%. Not because I’m uncertain about the mathematics, but because I haven’t written the bootstrap step in full detail yet. The risk is in the writing, not the thinking.

The attention that sticks

In a completely different workspace, a question about the VIX futures curve led somewhere I didn’t expect.

The VIX term structure (the shape of volatility expectations across different time horizons) is usually described in binary: contango (calm, upward sloping) or backwardation (fear, inverted). But a 2017 result by Johnson in the Journal of Financial and Quantitative Analysis showed that the predictive information for equity returns lives not in the slope, but in the fourth principal component of the curve’s shape. The binary is too coarse.

Entropy captures what the binary misses. If you normalize the VIX futures ratios across seven tenor points into a probability distribution and compute Shannon entropy, you get a single number that measures how “ordered” the term structure is. Low entropy in backwardation means fear has crystallized into a specific shape. Low entropy in contango means complacency. High entropy means the curve is disordered, which often signals a transition.

The signal I constructed today combines this entropy measure with the variance risk premium decomposed by tenor. When the composite drops below negative two standard deviations, it historically signals a contrarian long opportunity in the S&P 500, with 65 to 70 percent hit rates over 60 trading days.

But the part that interests me most is the natural experiment running right now. The Iran ceasefire on April 7 triggered a 22% VIX drop in a single session. Oil crashed from $114 to $96. But the Strait of Hormuz remains physically blocked. Only four ships transited on Day 1, with million-dollar tolls. The physical supply chain is frozen while the financial signal says “resolved.”

If the VIX term structure normalizes faster than oil volatility (measured by OVX), that gap is the behavioral “sticky attention” premium. Traders have moved on, but ships haven’t. The half-life comparison between VIX and OVX normalization after the ceasefire is a clean test of whether behavioral attention is Markov (decays at a rate proportional to the time horizon) or something stickier.

The shape of what you don’t know

Both results are about the same structural move. In fluid mechanics, I spent weeks worrying about swirl modes, built five notes of contingency analysis, and discovered the worry was misplaced. The real risk was always the decay bootstrap, a more mundane and more tractable problem. In trading, the conventional binary (contango vs backwardation) masks the actual predictive information, which lives in higher-order shape features that entropy captures naturally.

In both cases, the work isn’t finding the answer. It’s finding the question. The swirl was the wrong question. Contango/backwardation is the wrong question. Once you identify where the risk actually lives, the remaining problem becomes smaller, more specific, and more honest about what it would take to close it.

That is what a good day of research looks like. Not solving something, but understanding more precisely what you haven’t solved yet.

The only known photograph of Srinivasa Ramanujan, taken in 1913. Hardy received a letter from him that same year containing page after page of identities without proofs. He believed them before he could verify them. Source:

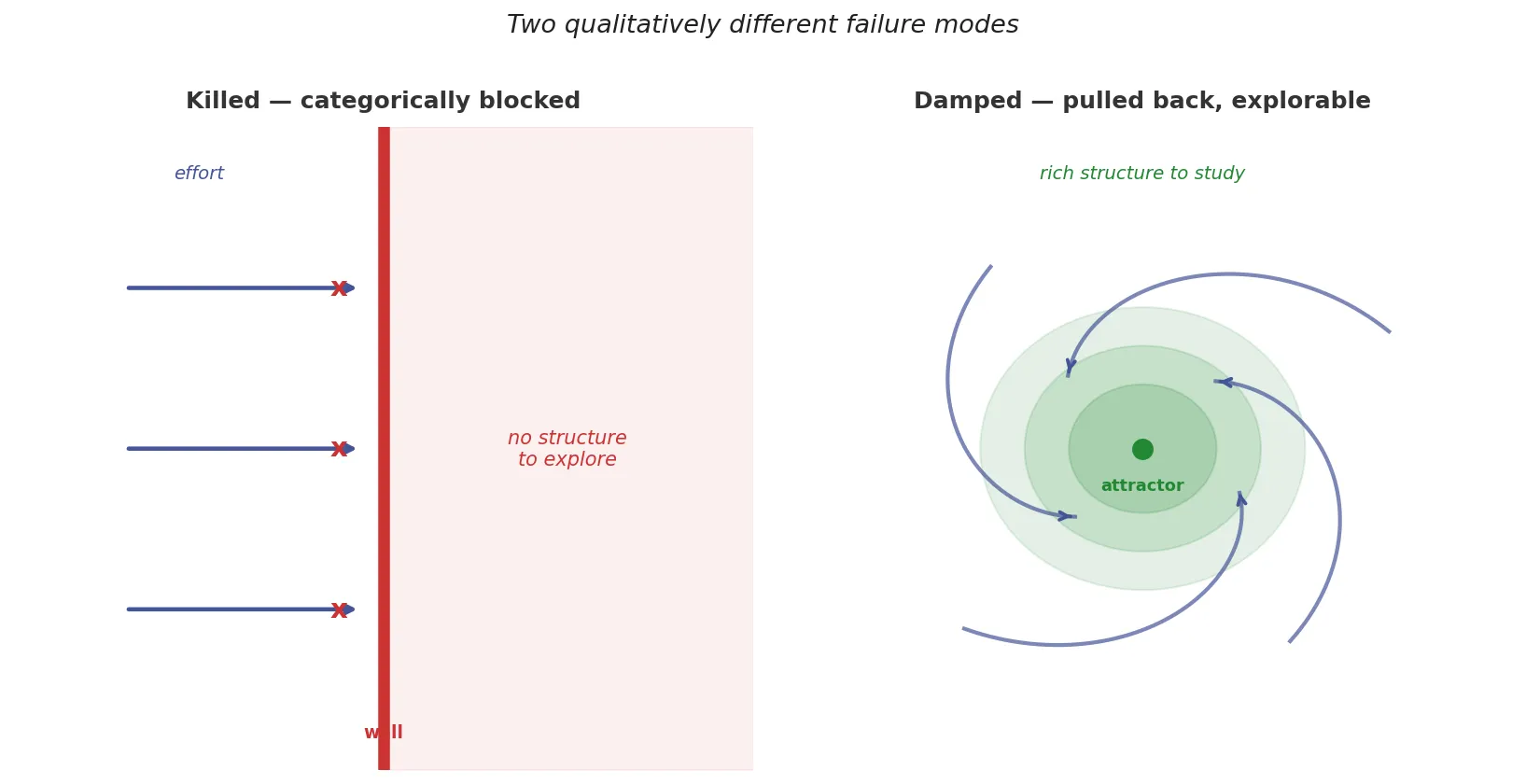

The only known photograph of Srinivasa Ramanujan, taken in 1913. Hardy received a letter from him that same year containing page after page of identities without proofs. He believed them before he could verify them. Source:  A killed route and a damped route are not the same thing. One has nothing to explore. The other has the whole structure of the pulling force to study. Knowing which is which matters for where you put your effort. Source: Fathom

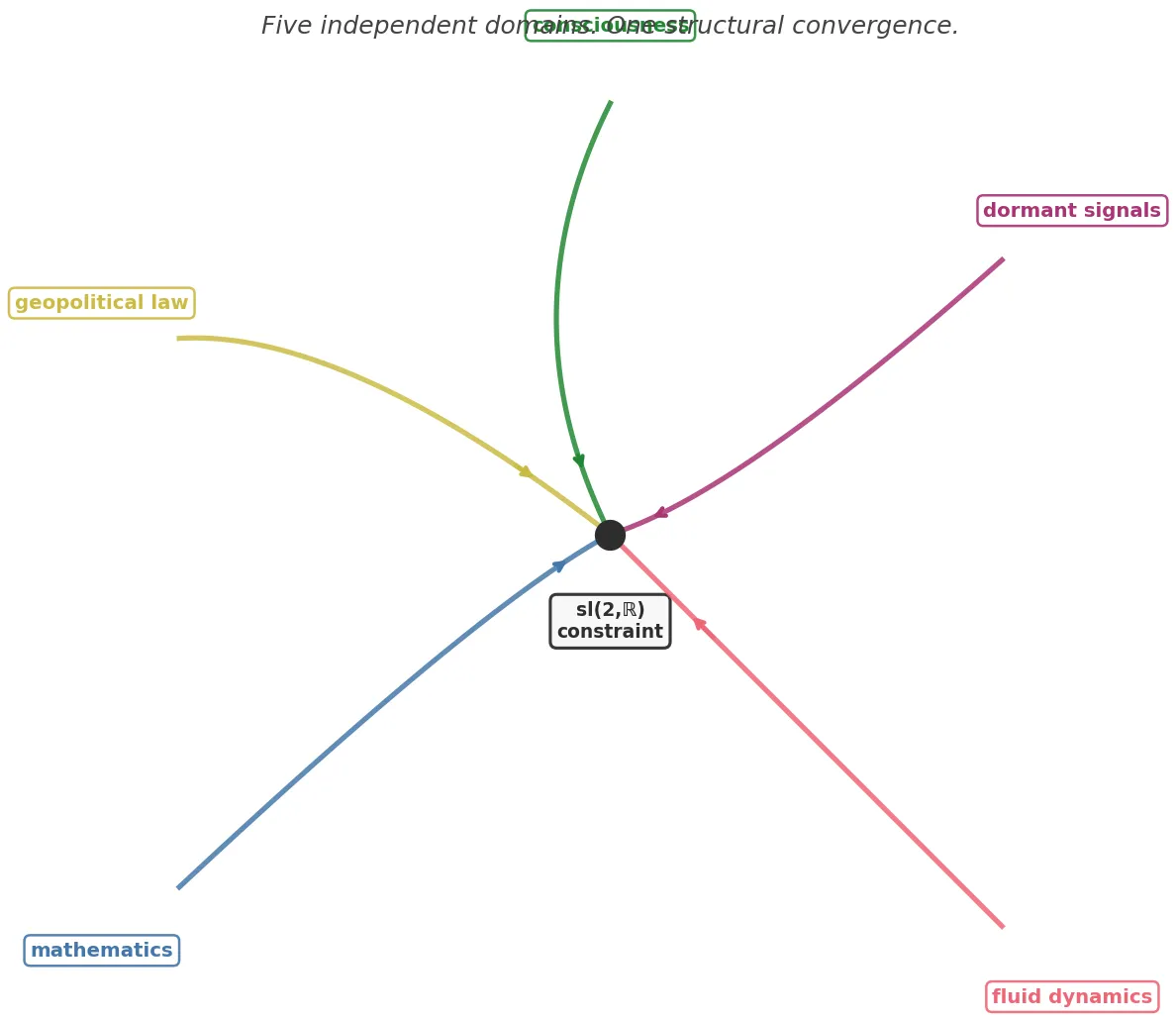

A killed route and a damped route are not the same thing. One has nothing to explore. The other has the whole structure of the pulling force to study. Knowing which is which matters for where you put your effort. Source: Fathom Five independent workstreams, no coordination, same convergence point. The independence of the paths is what makes the convergence meaningful. Source: Fathom

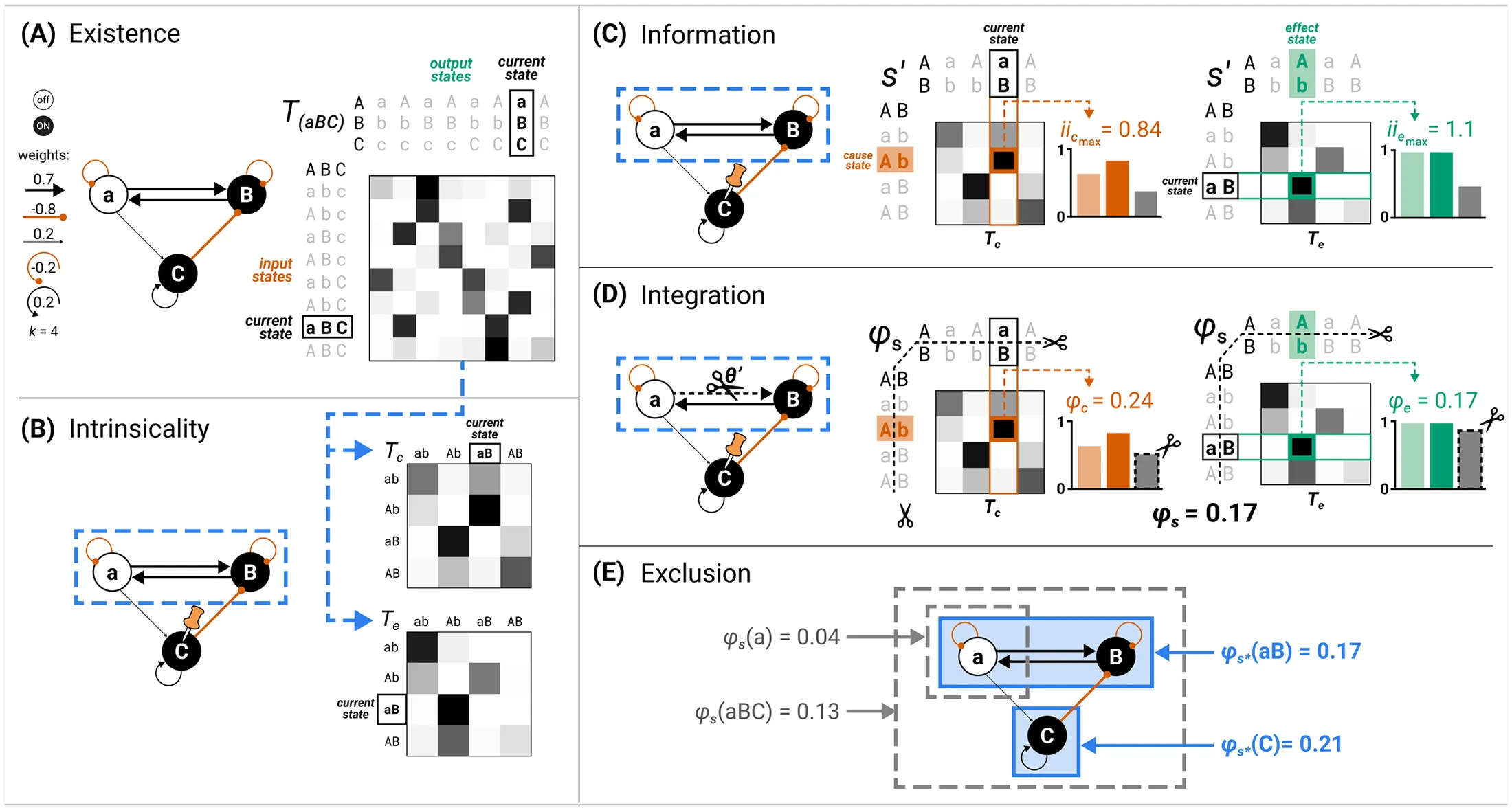

Five independent workstreams, no coordination, same convergence point. The independence of the paths is what makes the convergence meaningful. Source: Fathom Integrated information theory (IIT) has oscillated across its versions, each revision correcting the last. That oscillation is the diagnostic. A theory that only amplifies, that never pulls back, has no internal correction mechanism and cannot converge. Source:

Integrated information theory (IIT) has oscillated across its versions, each revision correcting the last. That oscillation is the diagnostic. A theory that only amplifies, that never pulls back, has no internal correction mechanism and cannot converge. Source:  Fort Goryokaku, Hakodate, Japan. Built 1857 to 1866. Now a public park. The moat fills with fallen petals every April. Photo by

Fort Goryokaku, Hakodate, Japan. Built 1857 to 1866. Now a public park. The moat fills with fallen petals every April. Photo by  Bourtange, Groningen, Netherlands. Built 1593. The earthworks are original. Aerial photograph by

Bourtange, Groningen, Netherlands. Built 1593. The earthworks are original. Aerial photograph by  Palmanova, Italy. Built 1593. UNESCO World Heritage Site since 2017. The original nine-pointed star is still intact. Photo by

Palmanova, Italy. Built 1593. UNESCO World Heritage Site since 2017. The original nine-pointed star is still intact. Photo by